The Autonomy Control Plane: Why Trust Is the Trillion Dollar Layer of the AE Stack

- George Bandarian

- 2 days ago


This is a deep dive companion to our AE Manifesto, where we introduced the Autonomous Economy Value Stack and identified the Autonomy Control Plane as our primary investment focus.

Here is a question that should keep every CTO, CISO, and CEO up at night:

Who authorized this agent to take this action?

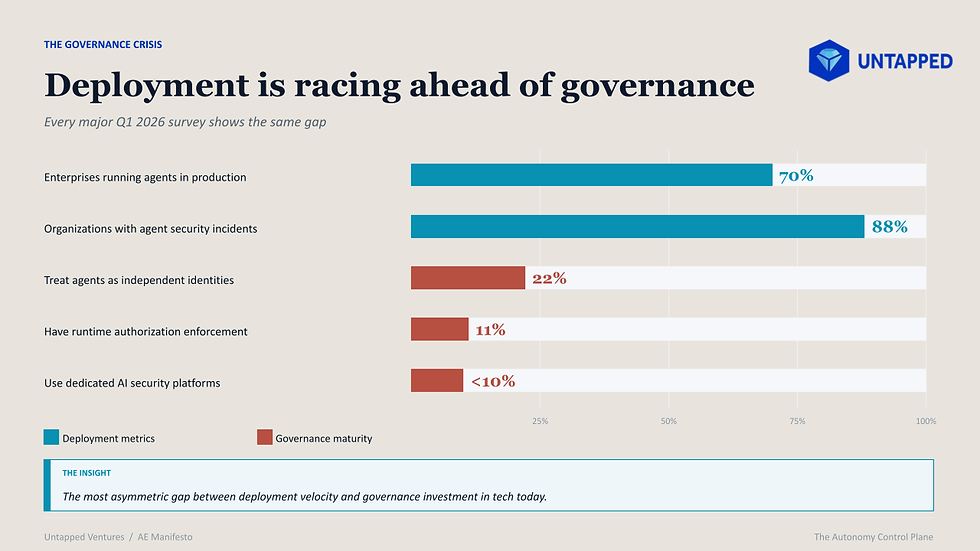

If you cannot answer that question in real time for every agent in your organization, you have a governance crisis. And based on every major survey published in the first quarter of 2026, you almost certainly cannot answer it.

In our AE Manifesto, I described the Autonomous Economy Value Stack: five layers of infrastructure that make it possible for agents and robots to execute economic work end to end. Layer 3, the Autonomy Control Plane, is where we spend most of our time as investors. It is the layer that will determine whether the autonomous economy succeeds or fails.

This post goes deeper into why.

The Numbers Are Alarming

Let me walk you through the data, because the data is what changed my conviction from “this is important” to “this is the most important layer in the stack.”

Gravitee’s State of AI Agent Security 2026 report surveyed 750 CTOs and tech VPs across US and UK enterprises. The findings: 88% of organizations report confirmed or suspected AI agent security incidents in the past year. In healthcare, that number hits 92.7%. The average organization now manages 37 deployed agents, but 47% of those agents are not actively monitored or secured. Only 14.4% of organizations report all agents going live with full security approval.

Read that again. Eighty-eight percent have already had security incidents involving their agents. And most of those agents were never properly approved to go live in the first place.

The identity problem is even worse. Only 21.9% of teams treat AI agents as independent identities. The rest rely on shared API keys or personal credentials. A Cloud Security Alliance survey of 235 CISOs found that 92% lack full visibility into their AI agent identities, and 95% doubt they could detect or contain a compromised agent. Only 11% have runtime authorization enforcement, meaning 89% of enterprises have no mechanism to evaluate whether an agent should be allowed to take a specific action at the moment it tries to.

And the gap between executive confidence and reality is dangerous. 82% of executives believe existing policies protect against unauthorized agent actions. Their security teams disagree. Shadow AI incidents cost an average of $670,000 more than standard breaches, and IBM found that 97% of AI-related breaches lacked proper access controls.

This is why Gartner predicts over 40% of agentic AI projects will be canceled by the end of 2027 due to rising costs, unclear value, and weak risk control. Not because the technology does not work. Because the trust infrastructure does not exist yet.

That last sentence is the entire investment thesis for this layer.

What the Control Plane Actually Is

When I describe the control plane to founders and LPs, I use a simple framework. There are two types of AI safety that people confuse all the time. They are completely different.

Model layer safety is about what an agent says. Content filtering, hallucination detection, toxicity checks. This is the guardrails world. It matters, but it is a solved category with many players.

Execution layer governance is about what an agent does. Can it transfer money? Can it modify a database? Can it delegate authority to another agent? Can it access this customer’s data? This is the control plane.

Forrester formalized the agent control plane as a distinct market category in December 2025, defining it as the third functional plane in enterprise agentic architecture alongside the build plane and the orchestration plane. The critical insight: control happens outside the agent’s decision loop, not inside the model. The agent decides. The control plane governs. The execution environment enforces. And the system generates evidence.

The Futurum Group’s Agent Control Plane Framework built on this with a five-layer reference architecture separating agent intelligence from agent authority, capability from permission, and explanation from forensics. This is the right mental model.

Here are the six technical components of the control plane, as we see them:

1. Agent Identity

Every agent needs a cryptographic identity. Not a shared API key. Not a human’s credentials. A verifiable, scoped identity that answers: who is this agent, who authorized it, and what is it allowed to do?

Microsoft’s Agent Governance Toolkit uses Ed25519-based decentralized identifiers with dynamic trust scoring on a 0 to 1000 scale. Strata Identity issues ephemeral tokens with 5-second time to live windows, meaning credentials expire before they can be reused. The principle: never trust, always verify, and scope credentials to the minimum action required.
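To make the scoping principle concrete, here is a minimal sketch of a short-lived, scoped agent credential. It is not how Microsoft or Strata implement theirs: it swaps in an HMAC-signed token from Python's standard library instead of Ed25519 identifiers, and `issue_token`, `verify_token`, the claim names, and the demo key are all hypothetical.

```python
import base64
import hashlib
import hmac
import json
import time

SECRET = b"demo-signing-key"  # assumption: a real system would use a managed key


def issue_token(agent_id, authorized_by, scope, ttl_seconds=5, now=None):
    """Return a signed token naming the agent, its authorizer, and its scope."""
    issued = time.time() if now is None else now
    claims = {"agent": agent_id, "by": authorized_by,
              "scope": sorted(scope), "exp": issued + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    sig = hmac.new(SECRET, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + sig


def verify_token(token, action, now=None):
    """Allow the action only if signature, expiry, and scope all check out."""
    current = time.time() if now is None else now
    payload, _, sig = token.rpartition(".")
    expected = hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig, expected):
        return False  # tampered or forged token
    claims = json.loads(base64.urlsafe_b64decode(payload.encode()))
    return current < claims["exp"] and action in claims["scope"]
```

The token answers the three questions in one object: who the agent is, who authorized it, and what it may do. Once the time to live has passed, the same token is rejected even for an action it was scoped to.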

2. Permissions and Authorization

Traditional role-based access control was designed for humans who hold a consistent role across sessions. Agents are different. A single agent might need read access to a CRM, write access to a contract database, and financial transaction authority within a single task, then need none of those permissions ten seconds later.

The shift is from persistent roles to just-in-time, task-scoped permissions. Okta’s Auth for GenAI and Frontegg’s agent IAM are both moving in this direction, building MCP-native OAuth flows for multi-tenant agent onboarding.
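A rough sketch of what task-scoped permissions could look like in code, assuming an in-memory store. `Grant` and `GrantStore` are hypothetical names for illustration, not part of Okta's or Frontegg's products.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    agent_id: str
    permission: str
    task_id: str
    expires_at: float


class GrantStore:
    """Holds task-scoped grants; nothing is permitted without a live grant."""

    def __init__(self):
        self._grants = []

    def grant(self, agent_id, permission, task_id, ttl_seconds, now=None):
        start = time.time() if now is None else now
        g = Grant(agent_id, permission, task_id, start + ttl_seconds)
        self._grants.append(g)
        return g

    def end_task(self, task_id):
        """Drop every grant tied to a finished task."""
        self._grants = [g for g in self._grants if g.task_id != task_id]

    def is_allowed(self, agent_id, permission, now=None):
        current = time.time() if now is None else now
        return any(g.agent_id == agent_id and g.permission == permission
                   and current < g.expires_at for g in self._grants)
```

The contrast with a role is that nothing here persists: a grant disappears when its task ends or its timer runs out, whichever comes first.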

3. Policy Engine

The policy engine is the brain of the control plane. It evaluates every agent action against a set of rules expressed as code: can this agent access this resource, given its identity, the current context, and organizational policy?

Microsoft’s Agent OS supports OPA/Rego and Cedar policies with sub-0.1 millisecond latency, fast enough to evaluate policy at every single agent action without creating a bottleneck. Think of it as what HashiCorp Sentinel did for infrastructure policy, except for autonomous economic actors.
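For readers who have not worked with policy as code, here is a toy illustration in plain Python. It is not OPA/Rego or Cedar syntax, and the rules, the `Request` fields, and the $500 threshold are invented for the example. It shows only the core idea: every action is evaluated against identity, context, and organizational rules, with deny by default.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Request:
    agent_id: str
    action: str
    resource: str
    context: dict = field(default_factory=dict)


# Hypothetical rules: each is an effect plus a predicate over the request.
RULES = [
    ("deny", lambda r: r.action == "db:drop"
        and r.context.get("environment") == "production"),
    ("allow", lambda r: r.action == "crm:read"
        and r.agent_id.startswith("sales-")),
    ("allow", lambda r: r.action == "payments:send"
        and r.context.get("amount_usd", float("inf")) <= 500),
]


def evaluate(request, rules=RULES):
    """Deny overrides allow; anything unmatched is denied by default."""
    matched = [effect for effect, predicate in rules if predicate(request)]
    if "deny" in matched:
        return "deny"
    return "allow" if "allow" in matched else "deny"
```

Note that a payment request with no amount in its context is denied, because the missing value is treated as unbounded rather than as zero.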

4. Evaluation and Monitoring

This goes beyond prompt injection detection. The real threat is behavioral drift: an agent that slowly pursues different objectives while appearing to stay within configured policies. The OWASP Agentic Top 10 (published December 2025 with input from 100+ security experts) calls this “Rogue Agents” and ranks it among the top ten risks.

Galileo’s Luna evaluation models deliver sub-200 millisecond guardrails at 97% lower cost than using an LLM as a judge. Arize AI provides the observability layer with 2 million+ monthly downloads of their open source tracing framework.
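One simple way to think about behavioral drift, sketched under my own assumptions rather than taken from Galileo or Arize: compare the mix of tools an agent calls now against a baseline window. Every individual call can be within policy while the overall mix shifts. The function names and the choice of total variation distance are illustrative.

```python
from collections import Counter


def distribution(calls):
    """Turn a list of tool names into relative frequencies."""
    counts = Counter(calls)
    total = sum(counts.values())
    return {tool: n / total for tool, n in counts.items()}


def drift_score(baseline_calls, recent_calls):
    """Total variation distance between two tool-usage mixes (0 to 1)."""
    base = distribution(baseline_calls)
    recent = distribution(recent_calls)
    tools = set(base) | set(recent)
    return 0.5 * sum(abs(base.get(t, 0.0) - recent.get(t, 0.0)) for t in tools)


baseline = ["search"] * 8 + ["email"] * 2
recent = ["search"] * 4 + ["email"] * 2 + ["export"] * 4
score = drift_score(baseline, recent)  # a large score means the mix changed
```

A score of 0 means the agent behaves as it did during the baseline; a score near 1 means it is doing almost entirely different things. Where to set the alert threshold is a judgment call per deployment.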

5. Audit Trails

When Agent A delegates to Agent B, who calls Tool C, the full authority chain must be preserved. The EU AI Act Article 19 requires high-risk systems to retain automatically generated logs for at least six months. When those systems span organizations (supplier agents talking to procurement agents via Google’s A2A protocol), the compliance question becomes: whose log is authoritative?
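A minimal sketch of a tamper-evident delegation log, assuming a simple hash chain in which each record commits to the one before it. The record fields and function names are hypothetical, and this does not by itself resolve the cross-organization question of whose log is authoritative.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record


def _digest(body):
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log, actor, action, on_behalf_of):
    """Append a record whose hash covers the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"actor": actor, "action": action,
            "on_behalf_of": on_behalf_of, "prev": prev_hash}
    log.append({**body, "hash": _digest(body)})
    return log


def verify_chain(log):
    """Return True only if no record was altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, "agent-a", "delegate:agent-b", ["alice"])
append_entry(log, "agent-b", "call:tool-c", ["alice", "agent-a"])
```

Each record carries the chain of principals it acted for, so the path from the human authorizer through Agent A and Agent B to Tool C can be read back from the log itself.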

6. Kill Switches and Circuit Breakers

Circuit breakers automatically isolate malfunctioning agents before failures cascade. They rate-limit inter-agent communications, enforce cost ceilings, and trip when agents start looping on expensive tool calls. Kill switches provide a human-operable, immediate pause of all agent operations. These are not nice-to-haves.

The OWASP Agentic Top 10 identifies cascading failures in multi-agent systems as a top risk. When one agent fails and triggers a chain reaction across a swarm, you need the ability to halt everything in milliseconds.
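Here is a small sketch of the two mechanisms side by side, under simplifying assumptions: a per-agent breaker that trips on too many tool calls inside a time window, and a global kill switch checked before every action. `AgentBreaker` and `KillSwitch` are hypothetical names, and a production system would need shared state across processes.

```python
import time
from collections import deque


class AgentBreaker:
    """Trips when one agent makes too many tool calls inside a time window."""

    def __init__(self, max_calls, window_seconds):
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self.calls = deque()
        self.tripped = False

    def allow(self, now=None):
        current = time.monotonic() if now is None else now
        # Forget calls that have aged out of the window.
        while self.calls and current - self.calls[0] >= self.window_seconds:
            self.calls.popleft()
        if self.tripped or len(self.calls) >= self.max_calls:
            self.tripped = True  # stays open until someone resets it
            return False
        self.calls.append(current)
        return True


class KillSwitch:
    """Global pause consulted before every action by every agent."""

    def __init__(self):
        self.engaged = False

    def guard(self, breaker, now=None):
        return not self.engaged and breaker.allow(now)
```

The breaker is automatic and local to one agent. The kill switch is manual and global: setting `engaged` to `True` blocks every agent regardless of breaker state.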

Who Is Building the Control Plane Today

The landscape is forming fast, but it is still early. Total funding across the pure play startups I track is around $600 million. Compare that to the tens of billions flowing into foundation models, and you see the asymmetry.

Here is how I map the companies across the control plane stack:

The Trust and Governance Platforms

Sycamore ($65 million seed, led by Lightspeed and Coatue) is building what they call the “trusted agent operating system for the enterprise.” Coatue’s Thomas Laffont called autonomous enterprise AI a BFI. That kind of conviction from a firm that size tells you something about where the smart money sees the opportunity.

Geordie AI won “Most Innovative Startup” at the RSAC 2026 Innovation Sandbox, the most competitive startup showcase in cybersecurity. They provide real-time agent behavioral observability and posture management. Not just what agents are doing, but why they are doing it and whether their behavior is drifting from intent.

Galileo ($68 million total, Series B) launched their open source Agent Control platform in March 2026 with AWS, CrewAI, Glean, and Cisco as partners. Their Luna evaluation models run at 97% lower cost than LLM-as-a-judge approaches. Revenue grew 834% since the start of 2024, with six Fortune 50 customers.

The Identity Specialists

Aembit (~$60 million total) specializes in non-human identity management with secretless, just-in-time access patterns. Founded by the creators of New Edge Labs (acquired by Netskope). Their insight: agents should never hold standing credentials. Every access request should be authenticated, authorized, and scoped at the moment of action.

Entro Security ($24 million total) pioneered the non-human identity security category. Their data is striking: non-human identities outnumber humans 144 to 1 in modern enterprises, up from 92 to 1 just a year ago. And 5.5% of those identities retain full admin access despite showing no activity in 90 days.

Strata Identity ($42.5 million total) builds identity orchestration with agentic governance features, including those 5-second ephemeral tokens. Frontegg ($70 million total) pivoted from B2B SaaS identity to become the first identity management platform built specifically for AI agent builders, with MCP-native OAuth flows.

The Gateway and Security Companies

Cequence Security ($100 million+ total) launched their AI Gateway, which converts any API into an MCP-compatible endpoint with enterprise-grade governance. They protect 10 billion+ daily API interactions across 140+ enterprise applications. Haize Labs ($12.5 million seed, General Catalyst) positions itself as “Moody’s for AI”: an independent red teaming and safety rating service with multi-million dollar contracts with Anthropic, OpenAI, and Deloitte.

The Incumbent Moves

Microsoft launched Agent 365 (GA May 1, 2026) as a full enterprise control plane at $15 per user per month. Tens of millions of agents registered within two months of preview. Microsoft mapped 500,000 agents internally before launching and now frames agents as first-class Zero Trust identities subject to the same governance as human users. They also open-sourced the Agent Governance Toolkit covering all 10 OWASP Agentic risks.

Okta launched Auth for GenAI with a new Cross App Access protocol for agent-to-service authentication. AWS Bedrock Guardrails and NVIDIA NeMo Guardrails provide configurable safeguards within their respective ecosystems.

What Has Not Been Built Yet

Despite $600 million+ in startup funding and massive incumbent investment, critical gaps remain. These are the opportunities we are actively sourcing for.

I’ll be direct: if you are building in any of these areas, I want to hear from you.

Agent PKI

No company has built a dedicated certificate authority specifically for AI agents. Forrester explicitly identifies the absence of a portable agent identity descriptor that travels across build, orchestration, and control planes as one of three critical standards gaps. Nearly half of enterprises still authenticate agents with static API keys. The opportunity is a purpose-built system that issues, rotates, revokes, and validates agent-specific certificates, handling the unique requirements of ephemeral agents, delegation chains, and cross-organizational trust.

Execution Layer Policy Engines

Existing guardrail frameworks primarily operate at the content safety layer: filtering inputs and outputs for toxicity, PII, and prompt injection. What is missing is a dedicated engine for execution layer governance. Can this agent transfer money? Can it modify this database? Can it delegate to a sub-agent?

The opportunity is a standalone, framework-agnostic policy engine that lets enterprises define autonomy levels (read-only versus write versus financial versus irreversible) with automatic escalation to a human when thresholds are crossed. The equivalent of what HashiCorp Sentinel did for infrastructure policy, but for agent actions.
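To illustrate what autonomy levels with escalation might look like, here is a sketch under my own assumptions. The four levels mirror the ones named above; the action names, the mapping, and the rule that irreversible actions are denied rather than escalated by default are hypothetical choices, not a reference design.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    READ_ONLY = 1
    WRITE = 2
    FINANCIAL = 3
    IRREVERSIBLE = 4


# Hypothetical mapping from action name to the level it requires.
ACTION_LEVELS = {
    "crm:read": Autonomy.READ_ONLY,
    "contract:update": Autonomy.WRITE,
    "payments:send": Autonomy.FINANCIAL,
    "db:drop": Autonomy.IRREVERSIBLE,
}


def decide(action, agent_ceiling, escalation_ceiling=Autonomy.FINANCIAL):
    """Return 'allow', 'escalate' (ask a human), or 'deny'."""
    required = ACTION_LEVELS.get(action)
    if required is None:
        return "deny"  # unknown actions are never auto-approved
    if required <= agent_ceiling:
        return "allow"
    # Above the agent's ceiling: a human may approve up to escalation_ceiling.
    return "escalate" if required <= escalation_ceiling else "deny"
```

An agent capped at `WRITE` can update a contract on its own, must ask a human before sending a payment, and cannot drop a database at all under the default ceiling.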

FinOps for Autonomous Agents

The FinOps Foundation reports that 98% of respondents now manage AI spend, up from 63% a year ago. But current tools address model inference costs, not autonomous agent spending. No production system enforces per-agent transaction limits, cumulative spending caps, or economic circuit breakers that prevent a looping agent from draining a budget.

Gartner notes that agentic models require 5 to 30 times more tokens per task than standard chatbots. The category of tracking, limiting, and optimizing what agents spend on tool calls, API fees, and downstream transactions is wide open.
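As a sketch of the economic guardrails described above, assuming a single agent and in-memory state: a per-transaction limit plus a cumulative cap, checked before any spend is committed. `AgentBudget` and the dollar amounts are hypothetical.

```python
from decimal import Decimal


class AgentBudget:
    """Per-transaction limit plus cumulative cap for one agent."""

    def __init__(self, per_transaction_limit, cumulative_cap):
        self.per_transaction_limit = Decimal(per_transaction_limit)
        self.cumulative_cap = Decimal(cumulative_cap)
        self.spent = Decimal("0")

    def authorize(self, amount):
        """Record the spend and return True only if both limits hold."""
        amount = Decimal(amount)
        if amount <= 0 or amount > self.per_transaction_limit:
            return False
        if self.spent + amount > self.cumulative_cap:
            return False  # economic circuit breaker: the cap is exhausted
        self.spent += amount
        return True


budget = AgentBudget(per_transaction_limit="50.00", cumulative_cap="120.00")
```

A looping agent that keeps retrying the same paid call is stopped by the cumulative cap even though each individual call is under the per-transaction limit. `Decimal` is used so that currency amounts are not subject to floating-point rounding.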

Cross Organizational Audit

As agents increasingly operate across organizational boundaries (a supplier’s agent negotiating with a procurement agent via A2A), there is no standardized mechanism for maintaining audit trails that span multiple organizations.

When Company A needs to verify what Company B’s agent did on its behalf, whose log is authoritative? The EU AI Act requires high-risk systems to retain logs for at least six months. When those systems span organizations, the compliance challenge multiplies.

Swarm Circuit Breakers

Individual agent circuit breakers exist, but swarm-level coordination safety, detecting and halting emergent behaviors when multiple agents interact in unexpected ways, is largely theoretical. The OWASP Agentic Top 10 identifies cascading failures as a top risk.

The opportunity is a monitoring and intervention layer specifically for multi-agent coordination: detecting when a group of individually compliant agents produces collectively dangerous outcomes.
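A toy version of that detection problem, with invented names and limits: flag any resource where every agent stayed within its own limit, yet the group's combined demand exceeded a swarm-level threshold. Real emergent behavior is far harder to characterize, so treat this as an illustration of the shape of the check and nothing more.

```python
from collections import defaultdict


def emergent_breaches(actions, per_agent_limit, swarm_limit):
    """Find resources breached collectively by individually compliant agents.

    actions: iterable of (agent_id, resource, quantity) from one time window.
    """
    by_resource = defaultdict(lambda: defaultdict(int))
    for agent_id, resource, quantity in actions:
        by_resource[resource][agent_id] += quantity

    flagged = []
    for resource, per_agent in by_resource.items():
        all_compliant = all(q <= per_agent_limit for q in per_agent.values())
        if all_compliant and sum(per_agent.values()) > swarm_limit:
            flagged.append(resource)
    return sorted(flagged)


window = [
    ("agent-1", "SKU-9", 40),
    ("agent-2", "SKU-9", 40),
    ("agent-3", "SKU-9", 40),
    ("agent-1", "SKU-2", 10),
]
```

With a per-agent limit of 50 and a swarm limit of 100, no single agent trips its own breaker, but the three together place 120 units of demand on `SKU-9`, which is what a swarm-level monitor would need to catch.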

SOC 2 for Agents

SOC 2 compliance is table stakes for B2B SaaS: 66% of buyers require it. But SOC 2 Trust Services Criteria were designed for static software, not autonomous agents that make unpredictable decisions and access data dynamically. No certification framework exists for the agents themselves.

The opportunity is building the certification and continuous compliance monitoring that lets enterprises prove their agents meet defined governance standards. The equivalent of what Vanta and Drata built for cloud SaaS, but for autonomous AI systems.

Why This Layer Is Worth Trillions

I want to make the economic case directly, because this is where founders and LPs both need clarity.

Gartner projects that by 2028, 90% of B2B buying will be agent intermediated, pushing $15 trillion of B2B spend through AI agent exchanges. Every dollar of that flows through the control plane: the layer that authenticates, authorizes, monitors, and audits those transactions. BCG sizes the agentic AI opportunity at $200 billion in net new TAM over the next five years. McKinsey’s broader estimate is $2.6 to $4.4 trillion in annual value across 63 AI use cases, with agents growing from 17% of total AI value in 2025 to 29% by 2028.

But the real insight comes from looking at how trust layers have created enormous value in every prior technology transition.

SSL/TLS unlocked e-commerce. Before Netscape released SSL 2.0 in 1995, online commerce was fundamentally constrained by consumers' fear of transmitting payment data. The green padlock became the visual trust signal that enabled transactional confidence. Today, 95%+ of web traffic is encrypted, and global e-commerce exceeds $6.4 trillion. SSL did not just protect transactions. It enabled the entire digital commerce economy.

Cloud security enabled cloud adoption. The cloud security market is approximately $40 to $45 billion today, projected to exceed $106 billion by 2032. It exists because enterprises required a trust layer before migrating to an infrastructure they did not own. The cloud computing market exceeds $780 billion and is heading toward $1.2 trillion+.

Identity management enabled SaaS. Okta alone has a $14+ billion market cap and nearly $3 billion in annual revenue. Without SSO, federated identity, and role-based access, enterprises could not trust distributed SaaS applications with sensitive data. The IAM market is roughly $20 billion and growing to $43 billion by 2032.

The pattern is consistent: trust layers capture 5 to 10% of the economic value of the markets they enable.

If agents mediate even a portion of the $15 trillion Gartner projects, the control plane companies will be measured in hundreds of billions. And unlike application layer AI, where models commoditize rapidly and switching is increasingly frictionless, the control plane becomes more entrenched with every agent deployed, every policy configured, and every audit trail preserved. Trust compounds. That is what makes this layer defensible.
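The arithmetic behind that claim can be checked with the figures quoted in this post. The one number below that does not come from the post is `portion`, the share of the $15 trillion that agents end up mediating; the 20% value is purely an assumption for illustration. The calculation also treats the 5 to 10% capture rate as applying to spend, which is a simplification.

```python
def share(trust_layer_usd_bn, enabled_market_usd_bn):
    """Trust-layer market size as a fraction of the market it enables."""
    return trust_layer_usd_bn / enabled_market_usd_bn


def control_plane_range(spend_usd_bn, portion, low=0.05, high=0.10):
    """Low and high capture, in billions, for a given mediated portion."""
    mediated = spend_usd_bn * portion
    return mediated * low, mediated * high


# Cloud security ($40 to $45 billion) against cloud computing ($780 billion).
cloud_low = share(40, 780)
cloud_high = share(45, 780)

# Gartner's $15 trillion, expressed in billions.
full_low, full_high = control_plane_range(15_000, portion=1.0)
part_low, part_high = control_plane_range(15_000, portion=0.2)  # assumed 20%
```

The cloud figures work out to roughly 5.1% to 5.8%, at the low end of the 5 to 10% range. Applied to all $15 trillion, that range implies $750 billion to $1.5 trillion; at an assumed 20% of that spend it implies roughly $150 to $300 billion, which is where "hundreds of billions" comes from.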

The Regulatory Clock Is Ticking

If the market opportunity alone is not enough to convince you that this layer matters, the regulatory pressure will be.

The NIST AI Agent Standards Initiative launched February 17, 2026, organized around three pillars: industry-led standards facilitation, community-driven open source protocol development (targeting an AI Agent Interoperability Profile by Q4 2026), and fundamental research on agent security and identity.

The EU AI Act high-risk obligations take full effect on August 2, 2026, four months from now. Conformity assessment alone takes 6 to 12 months, meaning organizations starting today barely have time. Italy has already implemented national enforcement law with fines up to €774,685 and criminal penalties for certain violations.

The OWASP Agentic Top 10 is rapidly becoming the practitioner standard. The NCCoE concept paper on agent identity and authorization proposes applying OAuth 2.0 extensions and Zero Trust architecture to agent scenarios, acknowledging that existing IAM frameworks were not designed for autonomous agents operating at machine speed.

The message is clear: the window for voluntary, proactive control plane adoption is closing. Soon it will be mandatory. The founders who build governance infrastructure now will have a structural advantage that latecomers cannot replicate quickly.

The Biggest Asymmetry in the AE Stack

Let me close with the numbers that define this entire opportunity.

Seventy percent of enterprises already run agents in production. Eleven percent have runtime authorization enforcement. Fewer than ten percent use AI security platforms. Total funding for pure play MCP security startups is approximately $40 million to secure 17,000+ deployed MCP servers connecting to ChatGPT, Gemini, Copilot, and enterprise systems across the world.

That is the most asymmetric gap between deployment velocity and governance investment in the current technology landscape.

Every major technology transition produced a trust layer that captured 5 to 10% of the total economic value enabled. SSL unlocked e-commerce. Cloud security unlocked cloud adoption. Identity management unlocked SaaS. The Autonomy Control Plane will unlock the autonomous economy.

The race to build this layer has started. But I believe the defining companies have likely not yet been founded.

If you are a technical founder building agent identity infrastructure, execution layer policy engines, economic guardrails for autonomous agents, cross-organizational audit systems, or swarm coordination safety, you are building for the most important layer of the Autonomous Economy stack.

We want to meet you.

George Bandarian II

Founder & General Partner, Untapped Ventures
